Refer to the original paper² for more details.ĪI finally learns to take strides.

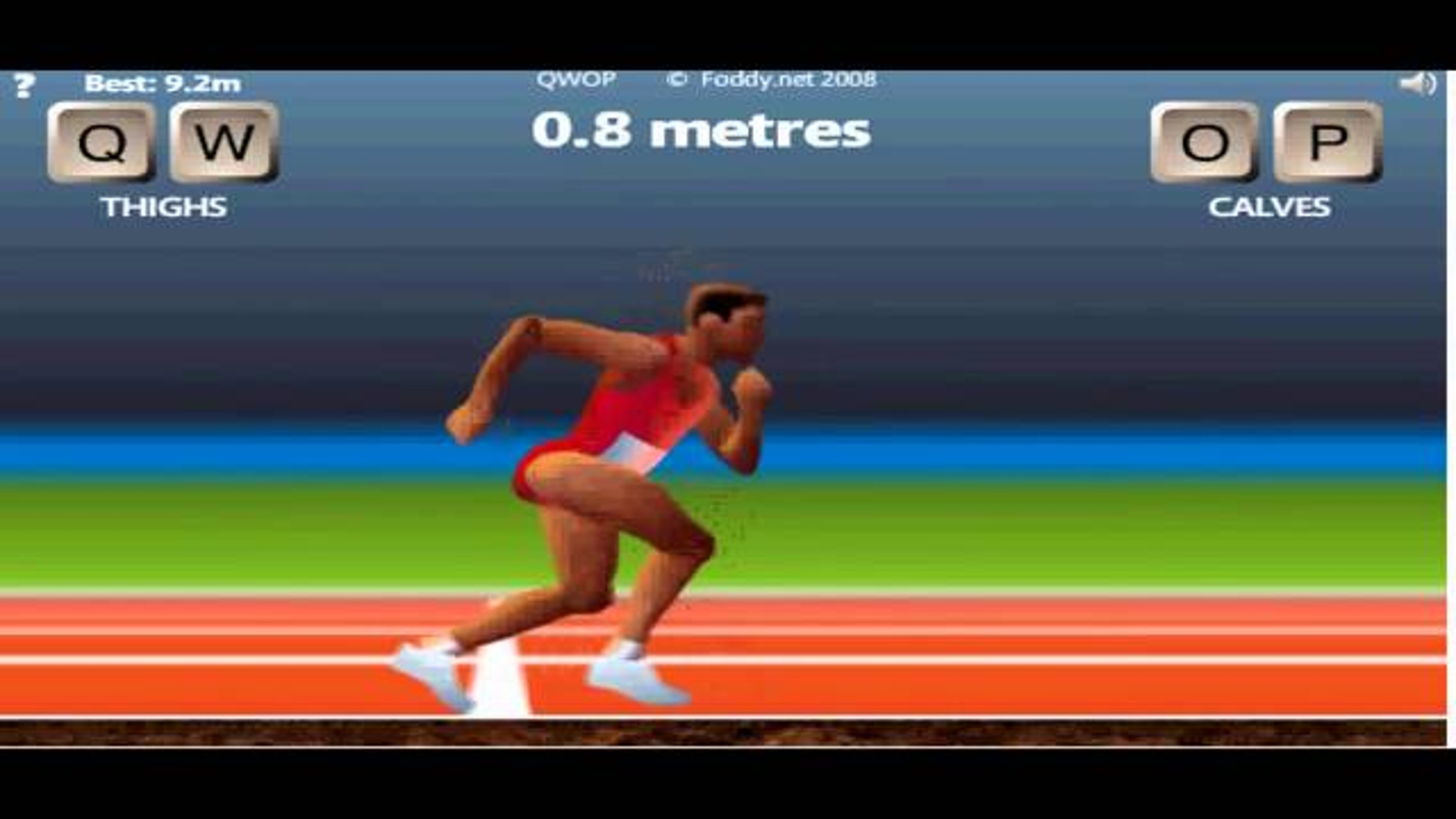

To address the instability of off-policy estimators, it uses Retrace Q-value estimation, importance weight truncation, and efficient trust region policy optimization. learns faster) than its on-policy only counterparts. This allows ACER to be more sample efficient (i.e. This means the agent not only learns from its most recent experience, but also from older experiences stored in memory. This Reinforcement Learning algorithm combines Advantage Actor-Critic (A2C) with a replay buffer for both on-policy and off-policy learning. The first agent’s learning algorithm was Actor-Critic with Experience Replay (ACER)². The action space consisted of 11 possible actions: each of the 4 QWOP buttons, 6 two-button combinations, and no keypress. The state consisted of position, velocity and angle for each body part and joint.

Next, the game was wrapped in an OpenAI gym style environment, which defined the state space, action space, and reward function.
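To make the environment description above concrete, here is a minimal sketch of how the 11-action space and a Gym-style wrapper could look. It is an illustration, not the code used for this project: the `QwopEnvSketch` class, the `game` adapter and its methods (`restart`, `press`, `body_state`, `torso_x`, `is_over`) are all hypothetical names, and the reward shown (forward progress per step) is only a placeholder assumption, since the actual reward function is not spelled out here.

```python
from itertools import combinations

KEYS = ("Q", "W", "O", "P")


def build_actions(keys=KEYS):
    """Return the discrete action list: no keypress, single keys, two-key combos."""
    actions = [()]                            # 1 action: no keypress
    actions += [(k,) for k in keys]           # 4 actions: each single button
    actions += list(combinations(keys, 2))    # 6 actions: two-button combinations
    return actions


ACTIONS = build_actions()


class QwopEnvSketch:
    """Gym-style interface: reset() -> state, step(a) -> (state, reward, done, info).

    `game` is a hypothetical adapter around the browser game. It is assumed to
    expose restart(), press(keys), body_state(), torso_x() and is_over();
    none of these names come from the article.
    """

    def __init__(self, game):
        self.game = game
        self._last_x = 0.0

    def _state(self):
        # Flatten the (position, velocity, angle) readings of every body part
        # and joint into one flat list of numbers.
        return [value for part in self.game.body_state() for value in part]

    def reset(self):
        self.game.restart()
        self._last_x = self.game.torso_x()
        return self._state()

    def step(self, action_index):
        # Translate the discrete action index into the keys to hold down.
        self.game.press(ACTIONS[action_index])
        x = self.game.torso_x()
        reward = x - self._last_x   # ASSUMPTION: placeholder reward, forward progress
        self._last_x = x
        return self._state(), reward, self.game.is_over(), {}
```

Enumerating the actions this way keeps the agent's output a single integer from 0 to 10, which is the form a discrete-action algorithm such as ACER expects.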
